Yes, that’s how it’s intended to work. Someone (me, @blacey if he’s willing, or someone else) will need to write an eZLO interface, but MSR is designed to work this way. It uses an object to interface with a system or API, and that object publishes the objects it sees/finds/knows into the Reactor environment. Currently, there is an object called VeraController that you can configure with the IP address of your Vera or openLuup system, and it publishes all devices, rooms, and scenes into MSR’s environment. There is a HassController, and HubitatController, as well. There is a CameraController, for which you provide via configuration the IP address, URL, and auth info for your cameras, and it publishes camera objects into the MSR environment. A WeatherController is configured with your location and account information and draws data from weather APIs to populate weather objects. The future will surely include an eZLO interface object, and as @therealdb offered, objects for direct interface to Shelly and Tasmota (so you could see and control these devices directly, without having to go through an extension on Vera/Hass/HE/other). There is no reason the future could not include a ZWay and/or OpenZWave interface object as well.
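To make the controller idea a bit more concrete, here is a minimal sketch of the pattern in Python. It is an illustration only: MSR runs on nodejs, and `Entity`, `Environment`, `Controller`, the `fetch` callables, and the `controller:id` key format are all hypothetical names made up for this example, not MSR's actual API. The only thing taken from the description above is the shape: each controller object talks to one system and publishes what it finds into a shared environment.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable


@dataclass
class Entity:
    """One published object: a device, room, scene, camera, and so on."""
    controller: str   # name of the controller that published it
    local_id: str     # ID as the source system knows it
    name: str
    kind: str

    @property
    def key(self) -> str:
        # Hypothetical key format so IDs from different systems cannot collide.
        return f"{self.controller}:{self.local_id}"


class Environment:
    """Shared registry that rules would look entities up in."""

    def __init__(self) -> None:
        self.entities: Dict[str, Entity] = {}

    def publish(self, entity: Entity) -> None:
        self.entities[entity.key] = entity


class Controller:
    """Wraps one system or API; `fetch` stands in for the real network calls."""

    def __init__(self, name: str, fetch: Callable[[], Iterable[dict]]) -> None:
        self.name = name
        self.fetch = fetch

    def refresh(self, env: Environment) -> None:
        for raw in self.fetch():
            env.publish(Entity(self.name, str(raw["id"]), raw["name"], raw["kind"]))


# Stand-ins for a Vera hub and a camera configuration (made-up data).
def fake_vera() -> list:
    return [
        {"id": 10, "name": "Porch Light", "kind": "device"},
        {"id": 3, "name": "Kitchen", "kind": "room"},
    ]


def fake_cameras() -> list:
    return [{"id": "front", "name": "Front Door", "kind": "camera"}]


env = Environment()
for controller in (Controller("vera", fake_vera), Controller("cameras", fake_cameras)):
    controller.refresh(env)

print(sorted(env.entities))
```

Adding support for another system in this sketch means writing one more `fetch` function and registering one more `Controller`; nothing that consumes the environment has to change.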

The current Vera Reactor has a device replacement tool that appears on the Tools tab when a device specified in conditions or activities is no longer available. This will “grow up” into a more general utility for system-wide device replacements. I am also playing with an idea for temporary replacement of a device (“when this device is referred to, use this one instead for now”), so you can trial-run replacement devices or just quickly patch past a device that has failed (e.g. if a bedroom temperature sensor fails but the thermostat reading is good enough until you get it fixed/replaced).
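The difference between the two kinds of replacement can be sketched like this. Again, this is Python for illustration only, and everything here is an assumption: the `DeviceResolver` class, the `replace_everywhere` function, and the rule layout (`conditions` with a `device` field) are hypothetical, not how Reactor or MSR stores things. A temporary replacement is applied when a reference is looked up and leaves the stored rules alone; a permanent one rewrites the stored references.

```python
from typing import Dict, List


class DeviceResolver:
    """Temporary stand-ins: applied at lookup time, stored rules are untouched."""

    def __init__(self) -> None:
        self.stand_ins: Dict[str, str] = {}

    def use_instead(self, missing: str, stand_in: str) -> None:
        self.stand_ins[missing] = stand_in

    def clear(self, missing: str) -> None:
        self.stand_ins.pop(missing, None)

    def resolve(self, device_id: str) -> str:
        seen = set()
        # Follow chained stand-ins, but stop if they loop back on themselves.
        while device_id in self.stand_ins and device_id not in seen:
            seen.add(device_id)
            device_id = self.stand_ins[device_id]
        return device_id


def replace_everywhere(rules: List[dict], old: str, new: str) -> int:
    """Permanent replacement: rewrite stored references, return how many changed."""
    changed = 0
    for rule in rules:
        for cond in rule.get("conditions", []):
            if cond.get("device") == old:
                cond["device"] = new
                changed += 1
    return changed


rules = [
    {"name": "Heat bedroom",
     "conditions": [{"device": "bedroom_temp", "op": "<", "value": 18}]},
]

# The bedroom sensor died; borrow the thermostat reading for now.
resolver = DeviceResolver()
resolver.use_instead("bedroom_temp", "thermostat_temp")
during_outage = resolver.resolve(rules[0]["conditions"][0]["device"])

# The new sensor arrives: drop the stand-in and migrate for good.
resolver.clear("bedroom_temp")
changed = replace_everywhere(rules, "bedroom_temp", "bedroom_temp_v2")
```

Because the stand-in lives outside the rules, undoing it is just a matter of clearing it; nothing has to be edited back.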

For current Reactor users, my plan before first public release is to have an import tool that takes the configuration of a Vera-based ReactorSensor and transforms it into MSR’s form with devices mapped to MSR entities. The formats of the configuration data between the two systems are, as you might imagine, very similar, mostly just changes in field names and some light cleanup of the structure to simplify the code in both the UI and Core. It will probably take more time to make the UI for that feature than the feature itself.
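As a rough picture of what that import amounts to, here is a small Python sketch. The field names on both sides (`device`, `operator`, `entity`, `op`), the `FIELD_RENAMES` table, and the shape of the device map are invented for the example; the real formats differ. It only shows the two steps mentioned above: rename fields, and map Vera device numbers to entities, while collecting anything that has no mapping so it can be reviewed rather than silently dropped.

```python
from typing import Dict, List, Tuple

# Hypothetical old-name -> new-name table; the real field names are different.
FIELD_RENAMES = {"device": "entity", "operator": "op"}


def import_condition(cond: dict, device_map: Dict[int, str],
                     unmapped: List[int]) -> dict:
    """Rename fields, then swap the Vera device number for an entity ID."""
    out = {FIELD_RENAMES.get(key, key): value for key, value in cond.items()}
    if "entity" in out:
        vera_id = out["entity"]
        if vera_id in device_map:
            out["entity"] = device_map[vera_id]
        else:
            unmapped.append(vera_id)   # leave as-is and flag it for review
    return out


def import_sensor(sensor: dict, device_map: Dict[int, str]) -> Tuple[dict, List[int]]:
    """Convert one sensor configuration; return the new rule and unmapped devices."""
    unmapped: List[int] = []
    rule = {
        "name": sensor["name"],
        "conditions": [import_condition(c, device_map, unmapped)
                       for c in sensor.get("conditions", [])],
    }
    return rule, unmapped


sensor = {
    "name": "Porch at night",
    "conditions": [
        {"device": 10, "operator": "=", "value": 1},
        {"device": 99, "operator": "<", "value": 50},
    ],
}
rule, unmapped = import_sensor(sensor, {10: "vera:10"})
```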

That’s right, since devices don’t really identify themselves uniquely in a portable way. That is, Vera has its device IDs, and UDNs (the rarely-used UIDs associated with a device), but if you exclude a Vera device and include it on a C7, none of that data follows the device, so there’s no way to know it’s the same physical unit just moved between controllers. Rather than trying to shoe-horn some kind of unique identifier overlay on both systems, it just seems easier and cleaner to provide a migration tool (“that device is now this device”) that you run once for the device and have done with it. I don’t want people to have to think about UIDs, and really as much as possible, any kind of IDs at all.

ReactorSensors, and yes, as I said above, it will be easy to bring it in, if you wish. There’s also no reason not to leave an automation on the Vera, if that’s what you want to do. Any system is going to come with certain steps that have to be taken to be functional, but I’m trying to minimize these as much as possible, so that any and all migrations are done on the user’s terms and at their leisure. I hate having software force me to change a working environment when I’m new to it and trying to learn how it works, or if I even want to use it. My goal is to make MSR something you can put to work immediately, but if it’s not for you, it hasn’t completely borged your existing setup. So with respect to the importing of ReactorSensors, for example, you might just import your RS into MSR, but disable, not delete, the ReactorSensor, and let MSR take over. If you later decide not to use MSR for that function or at all, you just disable MSR’s rule and re-enable the ReactorSensor. No fuss. No backup/restore or anything that angst-inducing.

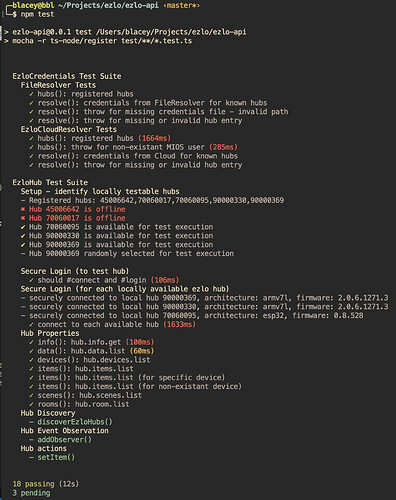

Docker anywhere, not just Pi, and that gives it a lot of systems to run on. But it will be runnable on systems that aren’t Docker-friendly, too. Really the key requirement is nodejs. I’m trying to keep the other dependencies to a minimum. So as I said elsewhere, Windows and macOS will be good, too.

The issue for the hubs is what’s allowed to run, and that each hub is a completely different architecture, even within the brand (as Vera shows with Edge vs Plus vs Secure, and eZLO with Atom, PlugHub, and the rest). Again, I mentioned above that I’m toying with building a package for Edge/Plus/Secure if I can get nodejs and the dependencies resolved, both so that it’s an easy install/run for any existing Vera user, but also as a way of giving the hardware a second life (long after you migrate off Vera, it could still be sitting there chugging away as your MSR box and be just fine). But nodejs runs in a lot of environments, so there will be lots of choices. The Docker containers will be the fast way for the most common systems, but the ZIP archive is always the swiss-army knife of installers.

Here’s how I think about it. Let’s take the unmentioned first…

Why make MSR when there’s Node-Red? For starters, I love Node-Red, but the fact that I love it may be something of a red flag. It’s a technical product and requires some pretty technical thinking. In a sense, it’s really like the difference between Reactor and PLEG. PLEG is a great and capable tool, but not everybody gets it, and for those that do, the learning curve can be pretty daunting. I’ve always tried to keep Reactor approachable, although it has clearly grown from a simpler, nascent beginning. But still, I think most people get it. So I think there’s room in the HA ecosystem for another logic/rules engine.

Second, the most consistent thing I see in the HA marketplace is that each manufacturer’s rules engine is a disappointment to its users at some level. Vera, of course, never evolved a serious rules engine, probably because PLEG came early enough to take the real pressure off the engineering (or marketing) team to get it done. As promising as Hubitat seems, and as much as many people like its Rules Engine, their forums are like this one in that there are lots of posts of bugs, confusion, etc., and even the lead of that subsystem regularly admits it is not everything they wish it could be. HomeAssistant… well, writing automations in YAML should, in my non-serious opinion, be considered a human-rights violation. Their GUI, Automation Editor, isn’t nearly up to snuff. So again, I think there’s room for a system that’s not just cross-platform, because maybe you don’t need it to be, but that presents a friendlier and more approachable environment in which to write automations than that which is native to the platform you’re using, be that many or just one. Choices. More choices.

Third, I think cross-platform is not everyone’s concern, but can be an issue for many people because no hub natively supports everything well – another consistent inconsistency in the HA marketplace. To that end, I’d rather have the option of using the best tool for the job, rather than accepting a compromise of 60% performance on thermostats because the platform otherwise satisfies 95% of my device compatibility needs. That’s a horrible trade-off. If eZLO becomes as awesome at ZWave as Melih touts, then let eZLO be the go-to hub for ZWave, but that should not stop you from using Shelly or Tasmota or ESPHome or Tuya devices just because the available (or not) eZLO plugins for those APIs aren’t up to par.

Finally, it’s not going to be least-common denominator. That may be the default view to keep things simple and for the majority of what users routinely use, but it is my unwavering goal to preserve access to as much as possible of the source environment. What you don’t see much of in the video is that any device from the Vera, for example, can be marked for “full data”. That means that device would bring over all of the Vera state variables defined on it, whether those map into a defined MSR capability or not, and you can use them in automations. That works today. I didn’t take the time to cover it in the video, it was already getting too long, but the way I’ve done Sonos plugin devices so far is a good example: there are six predefined capabilities it uses: av_transport, media_navigation, muting, volume and av_repeat. Clearly not everything you can do with Sonos, or the Sonos plugin enables you to do, is covered by these six, but they represent the majority. The rest are accessible just by tagging the device for full data, and you can do that for all devices, or devices of a certain class/criteria, or just a single device for which you have a specific need. It is also possible (today), entirely through configuration, for a user to define a new capability and add it to an existing entity (so you can make “site-specific” capabilities), and even create custom mappings of devices to entities. For example, at the moment there is no device mapping for the DSC Alarm Panel plugin, but you could sit down and create it in your local config, without writing any code or waiting for code support from me, and make the system publish entities sourced from that device so you can see states and perform actions.
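Here is a small Python sketch of how the "full data" idea and site-specific mappings fit together. It is illustrative only and not MSR's implementation: `CAPABILITY_MAP`, `build_attributes`, the `capability.attribute` naming, the `raw.` prefix, and the example state variable names are all assumptions made for this example. The point is just that mapped variables land in a capability, unmapped ones come along only when the device is tagged for full data, and a user-supplied mapping can extend the predefined set without any code changes.

```python
from typing import Dict, Optional, Tuple

# Predefined mapping: source state variable -> (capability, attribute).
# Variable names here are illustrative, not a definitive list.
CAPABILITY_MAP: Dict[str, Tuple[str, str]] = {
    "RenderingControl/Volume": ("volume", "level"),
    "RenderingControl/Mute": ("muting", "state"),
}


def build_attributes(state: Dict[str, object],
                     full_data: bool = False,
                     extra_map: Optional[Dict[str, Tuple[str, str]]] = None
                     ) -> Dict[str, object]:
    """Turn a device's raw state variables into entity attributes."""
    mapping = dict(CAPABILITY_MAP)
    if extra_map:
        mapping.update(extra_map)     # user-defined, site-specific mappings
    attrs: Dict[str, object] = {}
    for variable, value in state.items():
        if variable in mapping:
            capability, attribute = mapping[variable]
            attrs[f"{capability}.{attribute}"] = value
        elif full_data:
            attrs[f"raw.{variable}"] = value   # carried over unmapped
    return attrs


state = {
    "RenderingControl/Volume": 35,
    "RenderingControl/Mute": 0,
    "Example/GroupCoordinator": "living-room",   # no predefined capability
}

default_view = build_attributes(state)
full_view = build_attributes(state, full_data=True)
custom_view = build_attributes(
    state, extra_map={"Example/GroupCoordinator": ("site_grouping", "coordinator")})
```

In this sketch the default view stays small, the full-data view loses nothing from the source, and the custom view shows a capability added purely through configuration.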

So, that’s a bunch of words that basically come down to this: more choice, more approachable.